5 October 2021

The Royal Swedish Academy of Sciences has decided to award the Nobel Prize in Physics 2021

“for groundbreaking contributions to our understanding of complex physical systems”

with one half jointly to

Syukuro Manabe

Princeton University, USA

Klaus Hasselmann

Max Planck Institute for Meteorology, Hamburg, Germany

“for the physical modelling of Earth’s climate, quantifying variability and reliably predicting global warming”

and the other half to

Giorgio Parisi

Sapienza University of Rome, Italy

“for the discovery of the interplay of disorder and fluctuations in physical systems from atomic to planetary scales”

They found hidden patterns in the climate and in other complex phenomena

Three Laureates share this year’s Nobel Prize in Physics for their studies of complex phenomena. Syukuro Manabe and Klaus Hasselmann laid the foundation of our knowledge of the Earth’s climate and how humanity influences it. Giorgio Parisi is rewarded for his revolutionary contributions to the theory of disordered and random phenomena.

All complex systems consist of many different interacting parts. They have been studied by physicists for a couple of centuries, and can be difficult to describe mathematically – they may have an enormous number of components or be governed by chance. They could also be chaotic, like the weather, where small deviations in initial values result in huge differences at a later stage. This year’s Laureates have all contributed to us gaining greater knowledge of such systems and their long-term development.

The Earth’s climate is one of many examples of complex systems. Manabe and Hasselmann are awarded the Nobel Prize for their pioneering work on developing climate models. Parisi is rewarded for his theoretical solutions to a vast array of problems in the theory of complex systems.

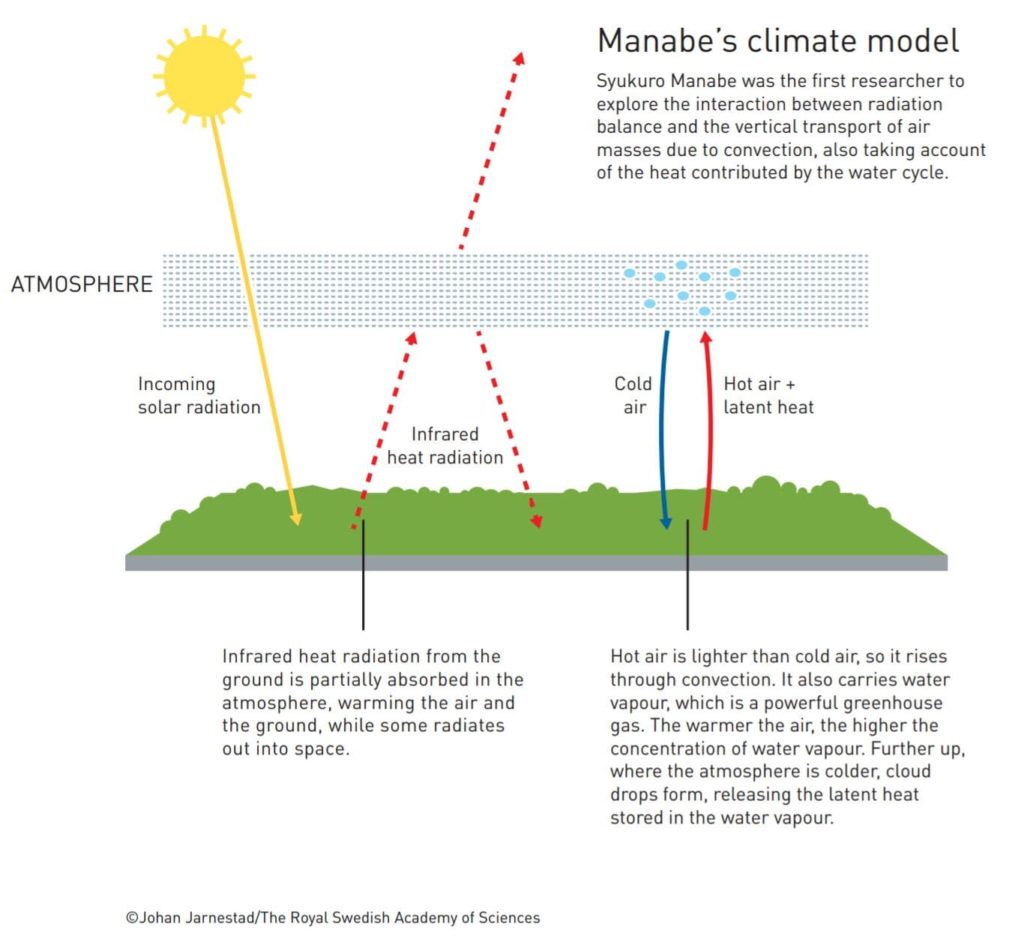

Syukuro Manabe demonstrated how increased concentrations of carbon dioxide in the atmosphere lead to increased temperatures at the surface of the Earth. In the 1960s, he led the development of physical models of the Earth’s climate and was the first person to explore the interaction between radiation balance and the vertical transport of air masses. His work laid the foundation for the development of climate models.

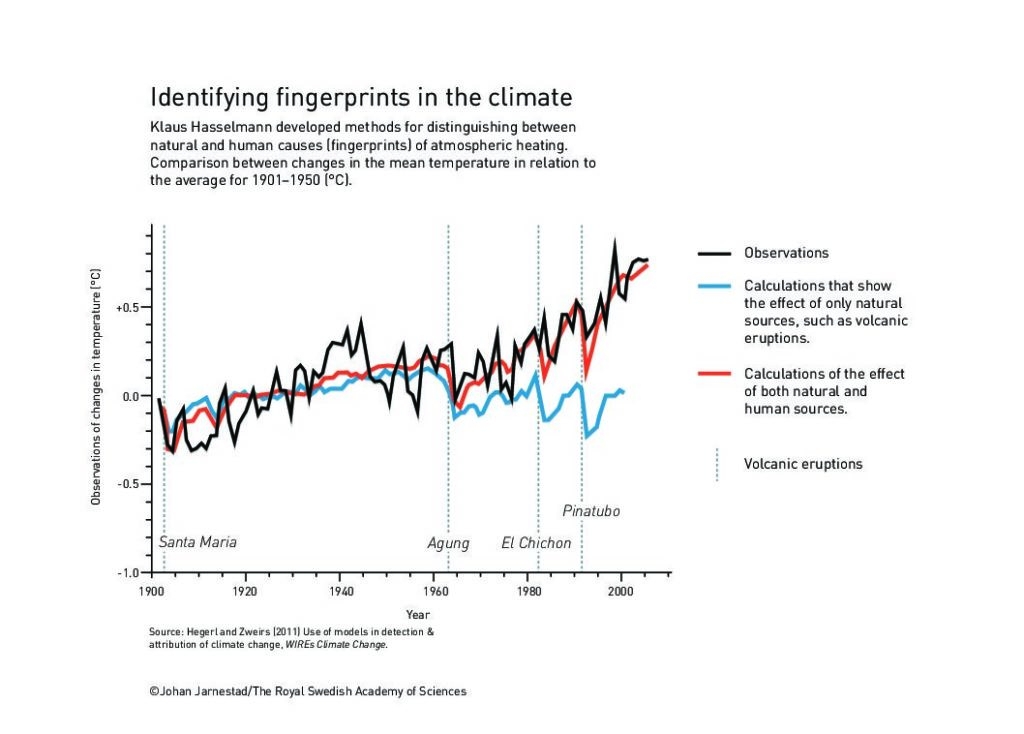

About ten years later, Klaus Hasselmann created a model that links together weather and climate, thus answering the question of why climate models can be reliable despite weather being changeable and chaotic. He also developed methods for identifying specific signals, fingerprints, that both natural phenomena and human activities imprint in the climate. His methods have been used to prove that the increased temperature in the atmosphere is due to human emissions of carbon dioxide.

Around 1980, Giorgio Parisi discovered hidden patterns in disordered complex materials. His discoveries are among the most important contributions to the theory of complex systems. They make it possible to understand and describe many different and apparently entirely random complex materials and phenomena, not only in physics but also in other, very different areas, such as mathematics, biology, neuroscience, and machine learning.

The greenhouse effect is vital to life

Two hundred years ago, French physicist Joseph Fourier studied the energy balance between the sun’s radiation towards the ground and the radiation from the ground. He understood the atmosphere’s role in this balance; at the Earth’s surface, the incoming solar radiation is transformed into outgoing radiation – “dark heat” – which is absorbed by the atmosphere, thus heating it. The atmosphere’s protective role is now called the greenhouse effect. This name comes from its similarity to the glass panes of a greenhouse, which allow through the heating rays of the sun, but trap the heat inside. However, the radiative processes in the atmosphere are far more complicated.

The task remains the same as that undertaken by Fourier – to investigate the balance between the shortwave solar radiation coming towards our planet and Earth’s outgoing longwave, infrared radiation. The details were added by many climate scientists over the following two centuries. Contemporary climate models are incredibly powerful tools, not only for understanding the climate but also for understanding the global heating for which humans are responsible.

These models are based on the laws of physics and have been developed from models that were used to predict the weather. Weather is described by meteorological quantities such as temperature, precipitation, wind or clouds, and is affected by what happens in the oceans and on land. Climate models are based upon the weather’s calculated statistical properties, such as average values, standard deviations, highest and lowest measured values, etcetera. They cannot tell us what the weather will be in Stockholm on 10 December next year, but we can get some idea of what temperature or how much rainfall we can expect on average in Stockholm in December.

Establishing the role of carbon dioxide

The greenhouse effect is essential for life on Earth. It governs temperature because the greenhouse gases in the atmosphere – carbon dioxide, methane, water vapor, and other gases – first absorb the Earth’s infrared radiation and then release this absorbed energy, heating up the surrounding air and the ground below it.

Greenhouse gases actually comprise a very small proportion of the Earth’s dry atmosphere, which is largely nitrogen and oxygen – these are 99 percent by volume. Carbon dioxide is just 0.04 percent by volume. The most powerful greenhouse gas is water vapor, but we cannot control the concentration of water vapor in the atmosphere, while we can control that of carbon dioxide.

The amount of water vapor in the atmosphere is highly dependent on temperature, leading to a feedback mechanism. More carbon dioxide in the atmosphere makes it warmer, allowing more water vapor to be held in the air, which increases the greenhouse effect and makes temperatures rise even further. If the carbon dioxide level drops, some of the water vapor will condense and the temperature will fall.

An important first piece of the puzzle about the impact of carbon dioxide came from Swedish researcher and Nobel Laureate Svante Arrhenius. Incidentally, it was his colleague, meteorologist Nils Ekholm who, in 1901, was the first to use the word greenhouse in describing the atmosphere’s storage and re-radiation of heat.

Arrhenius understood the physics responsible for the greenhouse effect by the end of the 19th century – that outgoing radiation is proportional to the radiant body’s absolute temperature (T) to the power of four (T⁴). The hotter the source of the radiation, the shorter the rays’ wavelength. The Sun has a surface temperature of 6,000°C and primarily emits rays in the visible spectrum. Earth, with a surface temperature of just 15°C, re-radiates infrared radiation that is invisible to us. If the atmosphere did not absorb this radiation, the surface temperature would barely exceed –18°C.
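The T⁴ relationship and the link between temperature and wavelength can be checked with a few lines of arithmetic. The sketch below is only an illustration: the physical constants, the solar constant of 1361 W/m² and the planetary albedo of 0.30 are assumed textbook values that do not appear in the text above, and the balance it solves is the simplest possible one (absorbed sunlight equals emitted heat, with no atmosphere).

```python
# Assumed textbook values, not taken from the article.
SIGMA = 5.670374419e-8    # Stefan-Boltzmann constant, W m^-2 K^-4
WIEN_B = 2.897771955e-3   # Wien displacement constant, m K
SOLAR_CONSTANT = 1361.0   # sunlight arriving at Earth's orbit, W m^-2
ALBEDO = 0.30             # fraction of sunlight reflected back to space


def emitted_flux(temp_k):
    """Power radiated per square metre by a black body (proportional to T^4)."""
    return SIGMA * temp_k ** 4


def peak_wavelength_um(temp_k):
    """Wavelength of strongest emission in micrometres (hotter means shorter)."""
    return WIEN_B / temp_k * 1e6


def equilibrium_temperature_k(solar_constant=SOLAR_CONSTANT, albedo=ALBEDO):
    """Surface temperature at which emitted heat balances absorbed sunlight."""
    # Sunlight is intercepted by a disc but emitted from a whole sphere: factor 4.
    absorbed = solar_constant * (1.0 - albedo) / 4.0
    return (absorbed / SIGMA) ** 0.25


sun_k = 6000 + 273.15     # the 6,000 degrees C quoted in the text
earth_k = 15 + 273.15     # the 15 degrees C quoted in the text
t_bare = equilibrium_temperature_k()

print(f"Sun peak wavelength:   {peak_wavelength_um(sun_k):.2f} micrometres")
print(f"Earth peak wavelength: {peak_wavelength_um(earth_k):.2f} micrometres")
print(f"Earth without greenhouse effect: {t_bare - 273.15:.1f} degrees C")
```

With these assumed inputs the Sun's emission peaks in the visible range, Earth's peaks in the infrared, and the balance temperature lands close to the –18°C mentioned above.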

Arrhenius was actually attempting to work out what caused the recently discovered phenomenon of ice ages. He arrived at the conclusion that if the level of carbon dioxide in the atmosphere halved, this would be enough for the Earth to enter a new ice age. And vice versa – a doubling of the amount of carbon dioxide would increase the temperature by 5–6°C, a result which, somewhat fortuitously, is astoundingly close to current estimates.

Pioneering model for the effect of carbon dioxide

In the 1950s, Japanese atmospheric physicist Syukuro Manabe was one of the young and talented researchers in Tokyo who left Japan, which had been devastated by war, and continued their careers in the US. The aim of Manabe’s research, like that of Arrhenius around seventy years earlier, was to understand how increased levels of carbon dioxide can cause increased temperatures. However, while Arrhenius had focused on radiation balance, in the 1960s Manabe led work on the development of physical models to incorporate the vertical transport of air masses due to convection, as well as the latent heat of water vapor.

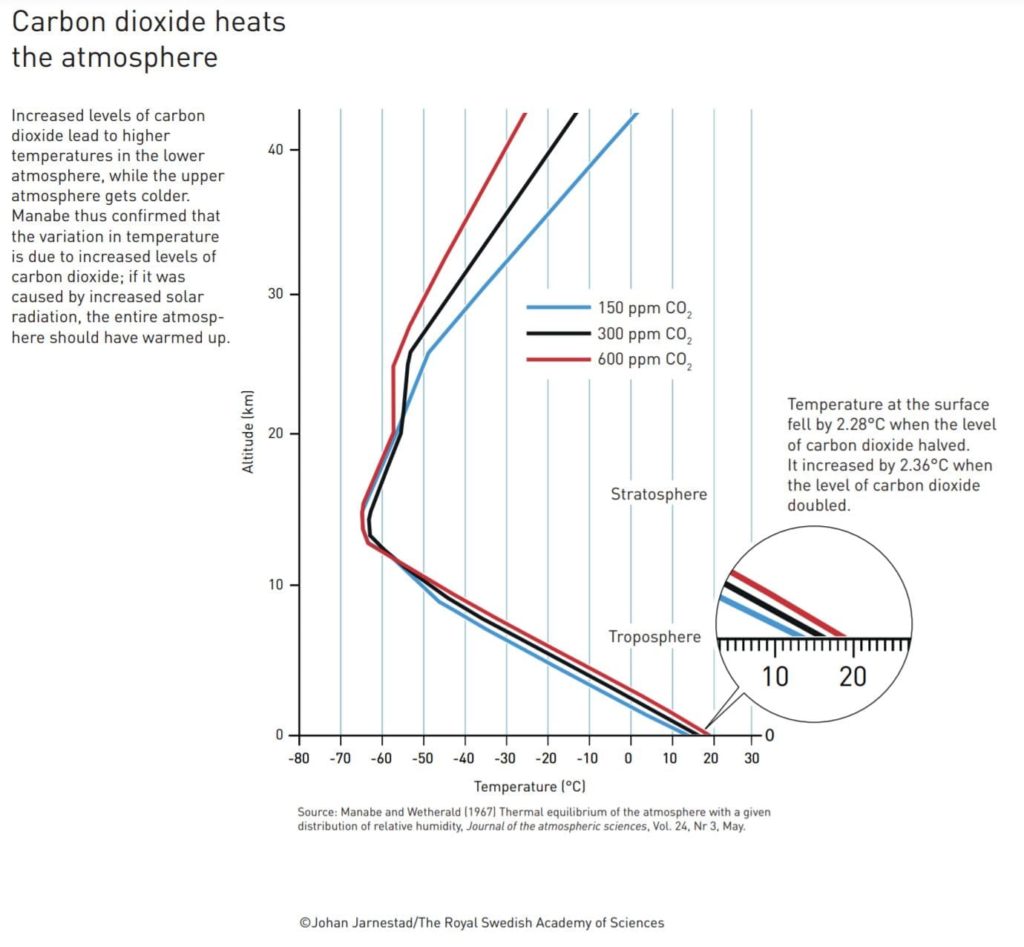

To make these calculations manageable, he chose to reduce the model to one dimension – a vertical column, 40 kilometers up into the atmosphere. Even so, it took hundreds of valuable computing hours to test the model by varying the levels of gases in the atmosphere. Oxygen and nitrogen had negligible effects on surface temperature, while carbon dioxide had a clear impact: when the level of carbon dioxide doubled, global temperature increased by over 2°C.

The model confirmed that this heating really was due to the increase in carbon dioxide because it predicted rising temperatures closer to the ground while the upper atmosphere got colder. If variations in solar radiation were responsible for the increase in temperature instead, the entire atmosphere should have been heating at the same time.

Sixty years ago, computers were hundreds of thousands of times slower than they are now, so this model was relatively simple, but Manabe got the key features right. You must always simplify, he says. You cannot compete with the complexity of nature – there is so much physics involved in every raindrop that it would never be possible to compute absolutely everything. The insights from the one-dimensional model led to a climate model in three dimensions, which Manabe published in 1975; this was yet another milestone on the road to understanding the climate’s secrets.

Weather is chaotic

About ten years after Manabe, Klaus Hasselmann succeeded in linking together weather and climate by finding a way to outsmart the rapid and chaotic weather changes that were so troublesome for calculations. Our planet has vast shifts in its weather because solar radiation is so unevenly distributed, both geographically and over time. Earth is round, so fewer of the sun’s rays reach the higher latitudes than the lower ones around the Equator. Not only this but the Earth’s axis is tilted, producing seasonal differences in incoming radiation. The differences in density between warmer and colder air cause the colossal transports of heat between different latitudes, between ocean and land, between higher and lower air masses, which drive the weather on our planet.

As we all know, making reliable predictions about the weather for more than the next ten days is a challenge. Two hundred years ago, the renowned French scientist Pierre-Simon de Laplace stated that if we just knew the position and speed of all the particles in the universe, it should be possible to calculate both what has happened and what will happen in our world. In principle, this should be true; Newton’s three-century-old laws of motion, which also describe air transport in the atmosphere, are entirely deterministic – they are not governed by chance.

However, nothing could be more wrong when it comes to the weather. This is partly because, in practice, it is impossible to be precise enough – to state the air temperature, pressure, humidity, or wind conditions for every point in the atmosphere. Also, the equations are nonlinear; small deviations in initial values can make a weather system evolve in entirely different ways. Based on the question of whether a butterfly flapping its wings in Brazil could cause a tornado in Texas, the phenomenon was named the butterfly effect. In practice, this means that it is impossible to produce long-term weather forecasts – the weather is chaotic; this discovery was made in the 1960s by the American meteorologist Edward Lorenz, who laid the foundation of today’s chaos theory.
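Sensitivity to initial values is easy to see numerically. The sketch below uses the three-variable system that Lorenz published in 1963 with its customary parameter values; neither the equations nor the parameters appear in the text above, so treat them as assumptions chosen for illustration. Two runs start one hundred-millionth apart and are stepped forward with a standard fourth-order Runge-Kutta scheme.

```python
import math


def lorenz(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz 1963 equations (assumed standard parameters)."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance the state by one fourth-order Runge-Kutta step."""
    def shifted(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))

    k1 = lorenz(state)
    k2 = lorenz(shifted(state, k1, dt / 2))
    k3 = lorenz(shifted(state, k2, dt / 2))
    k4 = lorenz(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def separation_history(delta=1e-8, dt=0.01, steps=4000):
    """Distance between two runs whose starting points differ by delta."""
    a = (1.0, 1.0, 1.0)
    b = (1.0 + delta, 1.0, 1.0)
    history = []
    for _ in range(steps):
        a = rk4_step(a, dt)
        b = rk4_step(b, dt)
        history.append(math.dist(a, b))
    return history


separations = separation_history()
print(f"separation after the first step: {separations[0]:.2e}")
print(f"largest separation in the run:   {max(separations):.2f}")
```

The two runs are indistinguishable at first and end up on entirely different parts of the trajectory, even though every step of the calculation is deterministic.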

Making sense of noisy data

How can we produce reliable climate models for several decades or hundreds of years into the future, despite the weather being a classic example of a chaotic system? Around 1980, Klaus Hasselmann demonstrated how chaotically changing weather phenomena can be described as rapidly changing noise, thus placing long-term climate forecasts on a firm scientific foundation. Furthermore, he developed methods for identifying the human impact on the observed global temperature.

As a young doctoral student in physics in Hamburg, Germany, in the 1950s, Hasselmann worked on fluid dynamics, then began to develop observations and theoretical models for ocean waves and currents. He moved to California and continued with oceanography, meeting colleagues such as Charles David Keeling, with whom the Hasselmanns started a madrigal choir. Keeling is legendary for beginning, back in 1958, what is now the longest series of atmospheric carbon dioxide measurements at the Mauna Loa Observatory in Hawaii. Little did Hasselmann know that in his later work he would regularly use the Keeling Curve, which shows changes in the carbon dioxide levels.

Obtaining a climate model from noisy weather data can be illustrated by walking a dog: the dog runs off the lead, backwards and forwards, side to side, and around your legs. How can you use the dog’s tracks to see whether you are walking or standing still? Or whether you are walking quickly or slowly? The dog’s tracks are the changes in the weather, and your walk is the calculated climate. Is it even possible to draw conclusions about long-term trends in the climate using chaotic and noisy weather data?

One additional difficulty is that the fluctuations that influence the climate are extremely variable over time – they may be rapid, such as in wind strength or air temperature, or very slow, such as melting ice sheets and warming oceans. For example, uniform heating by just one degree can take a thousand years for the ocean, but just a few weeks for the atmosphere. The decisive trick was incorporating the rapid changes in the weather into the calculations as noise, and showing how this noise affects the climate.

Hasselmann created a stochastic climate model, which means that chance is built into the model. His inspiration came from Albert Einstein’s theory of Brownian motion, also called a random walk. Using this theory, Hasselmann demonstrated that the rapidly changing atmosphere can actually cause slow variations in the ocean.
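The idea that fast, random weather can produce slow climate variations can be sketched with a toy calculation. The code below is not Hasselmann's model; it is a minimal illustration that assumes a single slowly responding temperature which relaxes back toward its mean over a time scale `tau` while receiving an independent random kick at every step. The function names and all parameter values are hypothetical.

```python
import random


def lag1_autocorrelation(series):
    """Correlation between each value and the next one in the series."""
    n = len(series)
    mean = sum(series) / n
    var = sum((v - mean) ** 2 for v in series)
    cov = sum((series[i] - mean) * (series[i + 1] - mean) for i in range(n - 1))
    return cov / var


def simulate(steps=20000, tau=100.0, dt=1.0, noise_std=1.0, seed=0):
    """Drive a slow variable (the 'ocean') with fast white noise (the 'weather')."""
    rng = random.Random(seed)
    weather, ocean = [], []
    temp = 0.0
    for _ in range(steps):
        kick = rng.gauss(0.0, noise_std)                 # no memory from step to step
        temp += -temp / tau * dt + kick * dt ** 0.5      # slow relaxation plus random kick
        weather.append(kick)
        ocean.append(temp)
    return weather, ocean


weather, ocean = simulate()
r_weather = lag1_autocorrelation(weather)
r_ocean = lag1_autocorrelation(ocean)
print(f"weather step-to-step correlation: {r_weather:.3f}")
print(f"ocean step-to-step correlation:   {r_ocean:.3f}")
```

The kicks themselves have essentially no memory, while the slow variable they drive is strongly correlated from one step to the next: it wanders gradually, like the walker rather than the dog.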

Discerning traces of human impact

Once the model for climate variations was finished, Hasselmann developed methods for identifying the human impact on the climate system. He found that the models, along with observations and theoretical considerations, contain adequate information about the properties of noise and signals. For example, changes in solar radiation, volcanic particles, or levels of greenhouse gases leave unique signals, fingerprints, which can be separated out. This method for identifying fingerprints can also be applied to the effect that humans have on the climate system. Hasselmann thus cleared the way to further studies of climate change, which have demonstrated traces of human impact on the climate using a large number of independent observations.
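Separating fingerprints can be illustrated, in a much reduced form, as fitting known patterns to a noisy record. The sketch below is an assumption-laden toy and not the method used in climate research: it invents two synthetic patterns (a steady ramp standing in for greenhouse gases and two short cooling pulses standing in for volcanic eruptions), mixes them with random noise, and recovers their amplitudes by ordinary least squares. All names, shapes and numbers are hypothetical.

```python
import math
import random


def fit_two_fingerprints(obs, f1, f2):
    """Least-squares amplitudes (a1, a2) such that obs is close to a1*f1 + a2*f2."""
    s11 = sum(x * x for x in f1)
    s22 = sum(y * y for y in f2)
    s12 = sum(x * y for x, y in zip(f1, f2))
    b1 = sum(x * o for x, o in zip(f1, obs))
    b2 = sum(y * o for y, o in zip(f2, obs))
    det = s11 * s22 - s12 * s12          # solve the 2x2 normal equations directly
    return (b1 * s22 - b2 * s12) / det, (b2 * s11 - b1 * s12) / det


def cooling_pulse(t, start, decay=5.0):
    """Hypothetical volcanic signal: a sudden dip that fades away."""
    return -math.exp(-(t - start) / decay) if t >= start else 0.0


n = 200
greenhouse = [t / n for t in range(n)]                              # slow steady rise
volcanic = [cooling_pulse(t, 50) + cooling_pulse(t, 120) for t in range(n)]

rng = random.Random(1)
observed = [
    1.0 * g + 0.5 * v + rng.gauss(0.0, 0.1)                         # signals plus noise
    for g, v in zip(greenhouse, volcanic)
]

a_ghg, a_volc = fit_two_fingerprints(observed, greenhouse, volcanic)
print(f"recovered greenhouse amplitude: {a_ghg:.2f} (true value 1.0)")
print(f"recovered volcanic amplitude:   {a_volc:.2f} (true value 0.5)")
```

Because the two patterns have different shapes in time, their contributions can be told apart even though the record contains both at once along with noise.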

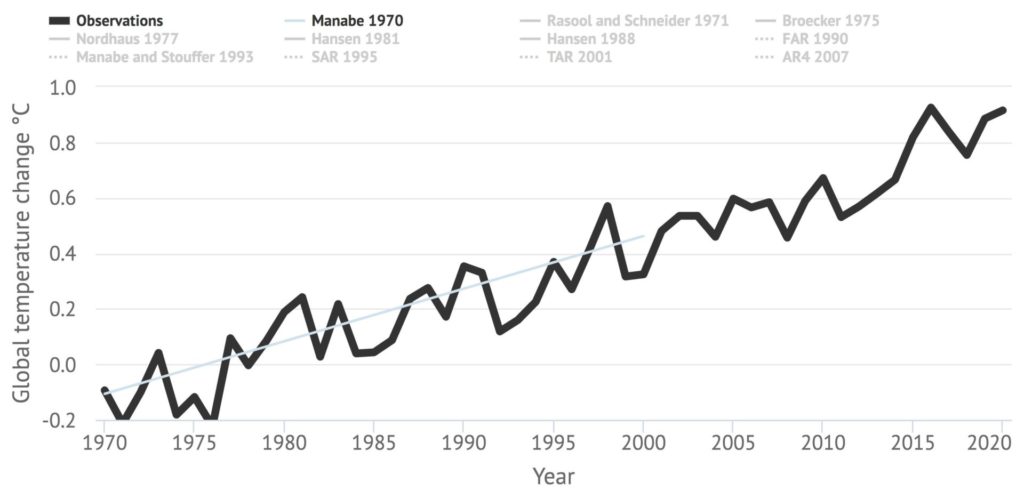

Climate models have become increasingly refined as the processes included in the climate’s complicated interactions are mapped more thoroughly, not least through satellite measurements and weather observations. The models clearly show an accelerating greenhouse effect; since the mid-19th century, the levels of carbon dioxide in the atmosphere have increased by 40 percent. Earth’s atmosphere has not contained this much carbon dioxide for hundreds of thousands of years. Accordingly, temperature measurements show that the world has heated by 1°C over the past 150 years.

Syukuro Manabe and Klaus Hasselmann have contributed to the greatest benefit for humankind, in the spirit of Alfred Nobel, by providing a solid physical foundation for our knowledge of Earth’s climate. We can no longer say that we did not know – the climate models are unequivocal. Is Earth heating up? Yes. Is the cause the increased amounts of greenhouse gases in the atmosphere? Yes. Can this be explained solely by natural factors? No. Are humanity’s emissions the reason for the increasing temperature? Yes.

Methods for disordered systems

Around 1980, Giorgio Parisi presented his discoveries about how apparently random phenomena are governed by hidden rules. His work is now considered to be among the most important contributions to the theory of complex systems.

Modern studies of complex systems are rooted in the statistical mechanics developed in the second half of the 19th century by James C. Maxwell, Ludwig Boltzmann, and J. Willard Gibbs, who named this field in 1884. Statistical mechanics evolved from the insight that a new type of method was necessary for describing systems, such as gases or liquids, that consist of large numbers of particles. This method had to take the particles’ random movements into account, so the basic idea was to calculate the particles’ average effect instead of studying each particle individually. For example, the temperature in a gas is a measure of the average value of the energy of the gas particles. Statistical mechanics is a great success because it provides a microscopic explanation for macroscopic properties in gases and liquids, such as temperature and pressure.
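The statement that temperature measures the average energy of the particles can be tried out with random numbers. The sketch below assumes an ideal gas in which each velocity component follows a Gaussian distribution (the Maxwell-Boltzmann picture) and compares the sampled average kinetic energy with the textbook value of 3/2 times Boltzmann's constant times the temperature. The particle mass is an assumed value, roughly that of a nitrogen molecule.

```python
import random

K_B = 1.380649e-23        # Boltzmann constant, J/K
MASS_N2 = 4.65e-26        # assumed particle mass in kg, roughly one N2 molecule


def mean_kinetic_energy(temp_k, mass_kg, n_particles=20000, seed=0):
    """Average kinetic energy of particles with Gaussian velocity components."""
    rng = random.Random(seed)
    sigma = (K_B * temp_k / mass_kg) ** 0.5      # spread of each velocity component
    total = 0.0
    for _ in range(n_particles):
        vx, vy, vz = (rng.gauss(0.0, sigma) for _ in range(3))
        total += 0.5 * mass_kg * (vx * vx + vy * vy + vz * vz)
    return total / n_particles


temperature = 300.0
sampled = mean_kinetic_energy(temperature, MASS_N2)
expected = 1.5 * K_B * temperature
print(f"sampled average energy: {sampled:.3e} J")
print(f"3/2 k_B T:              {expected:.3e} J")
```

No single particle has the average energy, yet the average over many particles is stable and is fixed by the temperature alone.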

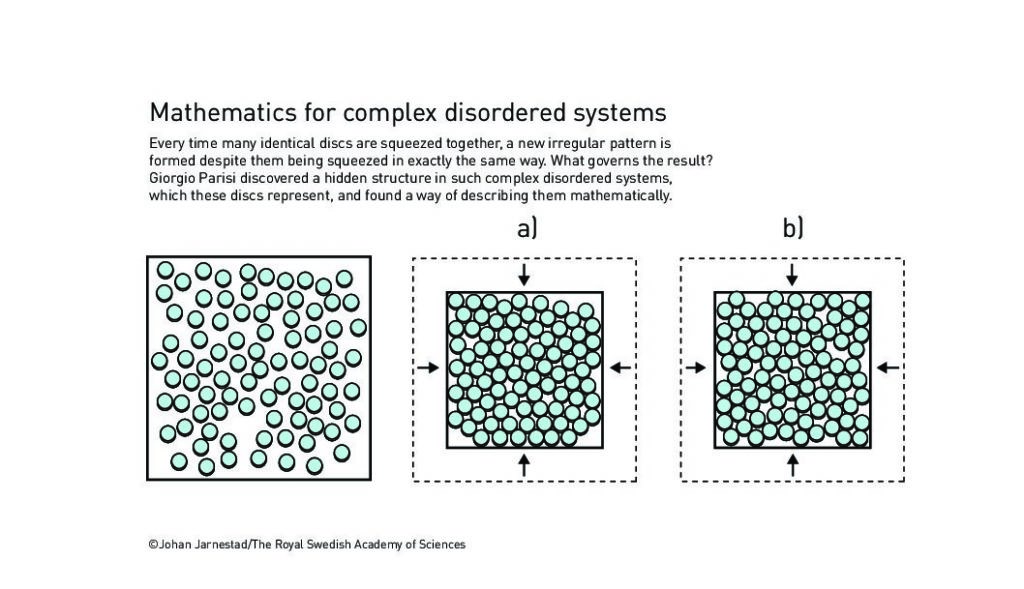

The particles in a gas can be regarded as tiny balls, flying around at speeds that increase with higher temperatures. When the temperature drops, or pressure increases, the balls first condense into a liquid and then into a solid. This solid is often a crystal, where the balls are organized in a regular pattern. However, if this change happens rapidly, the balls may form an irregular pattern that does not change even if the liquid is further cooled or squeezed together. If the experiment is repeated, the balls will assume a new pattern, despite the change happening in exactly the same way. Why are the results different?

Understanding complexity

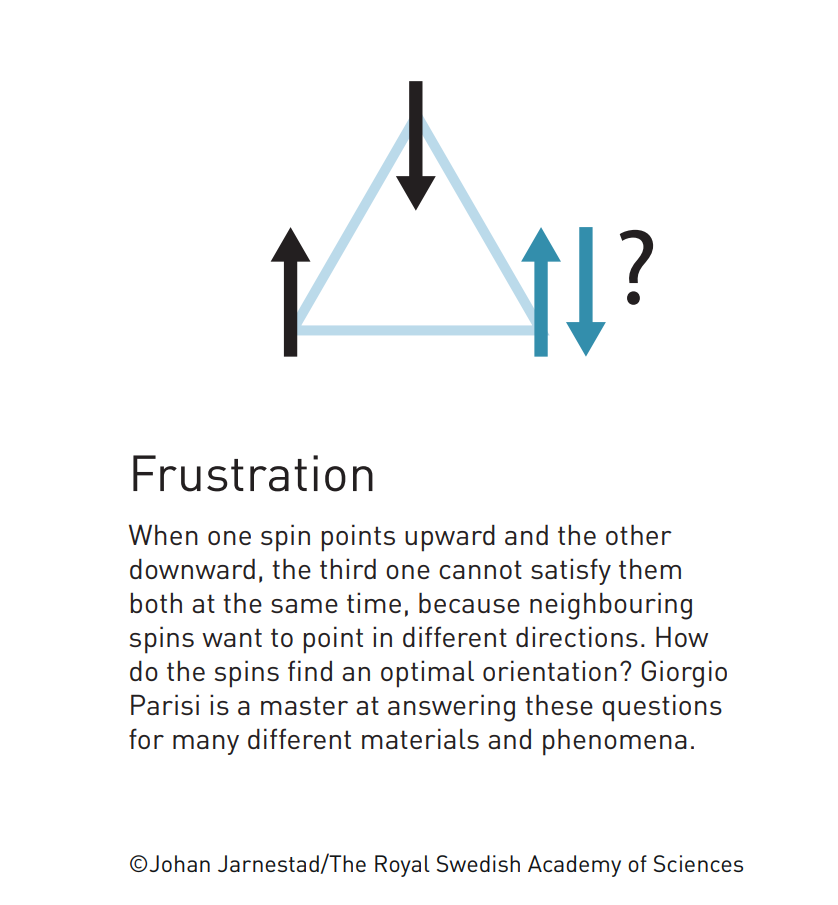

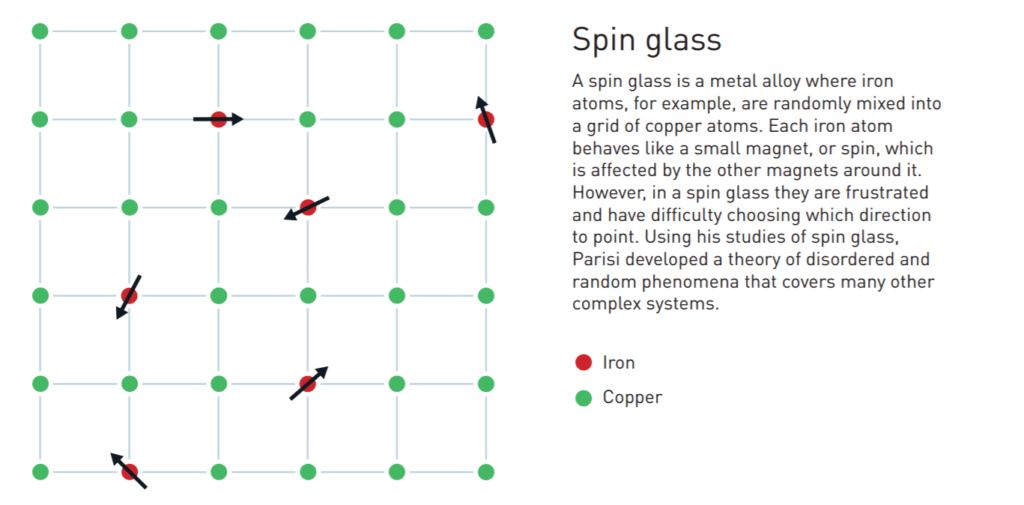

These compressed balls are a simple model for ordinary glass and for granular materials, such as sand or gravel. However, the subject of Parisi’s original work was a different kind of system – spin glass. This is a special type of metal alloy in which iron atoms, for example, are randomly mixed into a grid of copper atoms. Even though there are only a few iron atoms, they change the material’s magnetic properties in a radical and very puzzling manner. Each iron atom behaves like a small magnet, or spin, which is affected by the other iron atoms close to it. In an ordinary magnet, all the spins point in the same direction, but in a spin glass they are frustrated; some spin pairs want to point in the same direction and others in the opposite direction – so how do they find an optimal orientation?
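Frustration shows up already with three spins. The sketch below is a toy illustration, not Parisi's calculation: it assumes spins that can only point up (+1) or down (-1) and an energy of minus the coupling times the product of the two spins for each pair, then lists every arrangement by brute force. The coupling dictionaries are hypothetical examples.

```python
from itertools import product


def ground_states(couplings, n_spins=3):
    """Return the lowest energy and every spin arrangement that reaches it."""
    best_energy, best = None, []
    for spins in product((-1, 1), repeat=n_spins):
        # A pair lowers the energy when its spins agree (j > 0) or disagree (j < 0).
        energy = -sum(j * spins[a] * spins[b] for (a, b), j in couplings.items())
        if best_energy is None or energy < best_energy:
            best_energy, best = energy, [spins]
        elif energy == best_energy:
            best.append(spins)
    return best_energy, best


# Ordinary magnet: every pair wants to point the same way.
ferromagnet = {(0, 1): 1, (1, 2): 1, (0, 2): 1}
# Frustrated triangle: every pair wants to point in opposite directions.
frustrated = {(0, 1): -1, (1, 2): -1, (0, 2): -1}

e_ferro, states_ferro = ground_states(ferromagnet)
e_frus, states_frus = ground_states(frustrated)
print(f"ferromagnet: energy {e_ferro}, {len(states_ferro)} best arrangements")
print(f"frustrated:  energy {e_frus}, {len(states_frus)} best arrangements")
```

In the ordinary magnet all three pairs can be satisfied at once. In the frustrated triangle one pair is always left unsatisfied, so the best achievable energy is higher and many different arrangements tie for it.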

In the introduction to his book about spin glass, Parisi writes that studying spin glass is like watching the human tragedies of Shakespeare’s plays. If you want to make friends with two people at the same time, but they hate each other, it can be frustrating. This is even more the case in a classical tragedy, where strongly emotional friends and enemies meet on stage. How can the tension in the room be minimized?

Spin glasses and their exotic properties provide a model for complex systems. In the 1970s, many physicists, including several Nobel Laureates, searched for a way to describe the mysterious and frustrating spin glasses. One method they used was the replica trick, a mathematical technique in which many copies, replicas, of the system are processed at the same time. However, in terms of physics, the results of the original calculations were unfeasible.

In 1979, Parisi made a decisive breakthrough when he demonstrated how the replica trick could be ingeniously used to solve a spin glass problem. He discovered a hidden structure in the replicas and found a way to describe it mathematically. It took many years for Parisi’s solution to be proven mathematically correct. Since then, his method has been used in many disordered systems and become a cornerstone of the theory of complex systems.

The fruits of frustration are many and varied

Both spin glass and granular materials are examples of frustrated systems, in which various constituents must arrange themselves in a manner that is a compromise between counteracting forces. The question is how they behave and what the results are. Parisi is a master at answering these questions for many different materials and phenomena. His fundamental discoveries about the structure of spin glasses were so deep that they not only influenced physics, but also mathematics, biology, neuroscience, and machine learning because all these fields include problems that are directly related to frustration.

Parisi has also studied many other phenomena in which random processes play a decisive role in how structures are created and how they develop, and has dealt with questions such as: Why do we have periodically recurring ice ages? Is there a more general mathematical description of chaos and turbulent systems? Or – how do patterns arise in a murmuration of thousands of starlings? This question may seem far removed from the spin glass. However, Parisi has said that most of his research has dealt with how simple behaviors give rise to complex collective behaviors, and this applies to both spin glasses and starlings.

“The discoveries being recognized this year demonstrate that our knowledge about the climate rests on a solid scientific foundation, based on rigorous analysis of observations. This year’s Laureates have all contributed to us gaining deeper insight into the properties and evolution of complex physical systems,” says Thors Hans Hansson, chair of the Nobel Committee for Physics.

Syukuro Manabe, born 1931 in Shingu, Japan. Ph.D. 1957 from University of Tokyo, Japan. Senior Meteorologist at Princeton University, USA.

Klaus Hasselmann, born 1931 in Hamburg, Germany. Ph.D. 1957 from University of Göttingen, Germany. Professor, Max Planck Institute for Meteorology, Hamburg, Germany.

Giorgio Parisi, born 1948 in Rome, Italy. Ph.D. 1970 from Sapienza University of Rome, Italy. Professor at Sapienza University of Rome, Italy.

Prize amount: 10 million Swedish kronor, with one half jointly to Syukuro Manabe and Klaus Hasselmann and the other half to Giorgio Parisi

Further information: www.kva.se and www.nobelprize.org
